
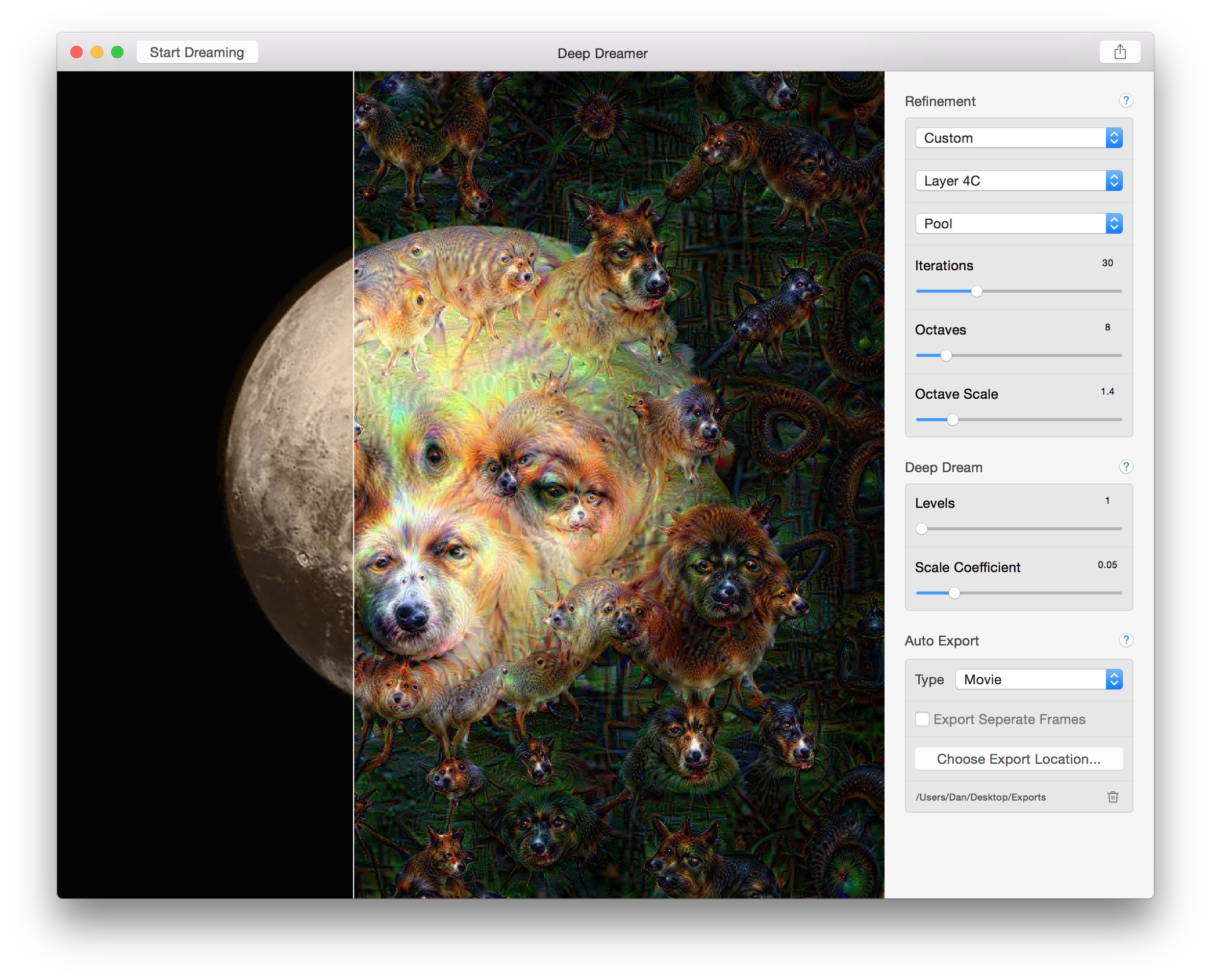

8/12/2023

Running deep dreamer on Windows

One step in the pursuit of artificial intelligence is to have computers recognize features within images. Facebook has a technology called DeepFace to recognize faces in the photos its users upload in order to suggest tags, for example. Google recently shared its artificial intelligence program for image recognition, which is built on artificial neural networks. The program is “trained” by looking at a huge collection of reference images that show the shape of buildings, or trees, or bugs, or dogs, etc. When you feed a new image into the program, the program puts that image through a series of layers. Each layer looks at a certain aspect of the image (e.g. edges within images, or orientation of shapes within images). The image is passed through these layers one by one, and by the final output layer the program should have an “answer” as to what features are included within the image.

Google's neural network can take image recognition to strange new levels, though, if engaged in a loop. Designers at Google took a random image and asked the program to identify features within that image. Once a feature was identified, the program was asked to enhance it. (So if the program was given an image of a cloudy sky and one of the clouds looked a little like a bird, the program would make that cloud look more like a bird.) That newly enhanced image was then run through the program again so that the feature (e.g. ...)

Google released this program under the name Deep Dream. Which is kind of a cool name, and evocative, but most of the applications look more like nightmares. The below video was created by starting with an image filled with random noise. Every four seconds a new layer of Google’s program is engaged to recognize and enhance features within the image. But the image is also constantly zooming in, so the image keeps changing and morphing in a nightmarish, psychedelic way. The video is silent so maybe queue up some Pink Floyd before starting. It really gets weird at about minute 1:30.

Inside an artificial brain from Johan Nordberg on Vimeo.

“What does this have to do with libraries?” you might ask. The technology could kick off a conversation about the ethics of artificial intelligence, I suppose. And it may also serve as an indication of the direction of the future Internet: towards automatic metadata description and (one could guess) hooking that metadata into a larger linked-data-type environment that continues to grow and describe new objects.

The first time you enter a prompt into an AI art generator and it actually creates something that perfectly matches what you want, it feels like magic. But it turns out AI art generators don't work using magic. They use computers, machine learning, powerful graphics cards, and a whole lot of data to do their thing.

AI art generators take a text prompt and, as best they can, turn it into a matching image. Since your prompt can be anything, the first thing all these apps have to do is attempt to understand what you're asking. To do this, the AI algorithms are trained on hundreds of thousands, millions, or even billions of image-text pairs. This allows them to learn the difference between dogs and cats, Vermeers and Picassos, and everything else. Different art generators have different levels of understanding of complex text, depending on the size of their training database.

The next step for the AI is to actually render the resulting image. There are two leading kinds of models: diffusion models, like Stable Diffusion, DALL·E 2, Midjourney, and CLIP-Guided Diffusion, which work by starting with a random field of noise and then editing it in a series of steps to match their understanding of the prompt; and Generative Adversarial Networks (GANs), like VQGAN-CLIP, BigGAN, and StyleGAN, which have been around for a few years longer. Both kinds of models can produce great, realistic results, though diffusion models are generally better at producing weird or wild images.

Let's Enhance is an AI-powered image upscaling app that recently added an art generator. AI art models: Doesn't say, but appears to be Stable Diffusion-based. Pricing: Free for 10 credits/month and watermarked images; from $12/month for 100 credits/month.
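To make the Deep Dream feedback loop described earlier a bit more concrete (identify a feature, enhance it, run the result through again), here is a minimal toy sketch in plain Python. It is not Google's program: the real thing uses a trained neural network with many layers, while this sketch assumes a single hand-written edge detector (`KERNEL`) on a one-dimensional "image". All names here (`KERNEL`, `activations`, `score`, `enhance`, `dream`) are made up for illustration.

```python
import random

# Hypothetical stand-in for one trained layer: a tiny edge-detecting kernel.
KERNEL = [-1.0, 0.0, 1.0]

def activations(image):
    """Response of the toy 'layer' at each valid position of a 1-D image."""
    k = len(KERNEL)
    return [sum(KERNEL[j] * image[i + j] for j in range(k))
            for i in range(len(image) - k + 1)]

def score(image):
    """How strongly the layer 'sees' its feature: sum of squared activations."""
    return sum(a * a for a in activations(image))

def enhance(image, step=0.05):
    """One gradient-ascent step on the pixels, so the detected feature grows."""
    grad = [0.0] * len(image)
    for i, a in enumerate(activations(image)):
        for j, w in enumerate(KERNEL):
            grad[i + j] += 2.0 * a * w  # d(score) / d(image[i + j])
    # Scale by the largest gradient so the biggest pixel change is `step`.
    norm = max(abs(g) for g in grad) or 1.0
    # Keep pixel values inside the valid range [0, 1].
    return [min(1.0, max(0.0, p + step * g / norm))
            for p, g in zip(image, grad)]

def dream(image, iterations=20):
    """Feed each enhanced image back in, repeating the enhancement."""
    for _ in range(iterations):
        image = enhance(image)
    return image

random.seed(0)
noise = [random.random() for _ in range(32)]  # start from random noise
dreamed = dream(noise)
print(f"feature score before: {score(noise):.3f}, after: {score(dreamed):.3f}")
```

The point of the sketch is only the loop: the "network" stays fixed and the image is what gets changed, a little on every pass, in whatever direction makes the detector respond more strongly. Swapping the toy kernel for a layer of a real trained network is what turns faint cloud shapes into birds.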
